REALTEST: Test and Reliability of Nanoelectronic Systems

01.2006 - 07.2013, DFG-Project: WU 245/5-1, 5-2

Project Description

The continuing scaling of circuit technology enables the integration of complete systems and even complete compute clusters on a single chip. At the same time, nano-electronic structures are subject to a growing number of defect mechanisms. The manufacturing process is much more sensitive to environmental influences, and for very small structures quantum mechanical effects require even higher manufacturing precision. Furthermore, variations in process and material lead to variations in circuit parameters across space (the position on the chip) as well as time (due to ageing effects). The "International Technology Roadmap for Semiconductors" [SIA] estimates that by 2019 the feature size of process technology will reach 7nm, but only between 10% and 20% of chips will be defect free. To achieve economical yield rates, appropriate measures such as fault tolerance, redundancy, repair and reconfiguration must be taken.

In an ongoing trend, the share of flip-flops relative to combinatorial elements is growing in modules of free, random logic. This development is a consequence of the massive pipelining used to increase the operating frequency of integrated circuits and of the ever shorter critical paths in the combinatorial part. Additionally, many architectural design techniques, such as speculation and instruction scheduling in hardware, require larger register sets. Finally, the existing techniques for improved reliability, such as time and structural redundancy, increase the number of memory elements in free random logic. Circuits with millions of flip-flops in free random logic are already commonplace in the industry [Kupp04].

The growth in memory elements is observed not only in data paths but also in control-dominated modules, for which regularity and minimized delay are becoming more important than minimum-area state encoding, which in turn leads to a growing share of memory elements.

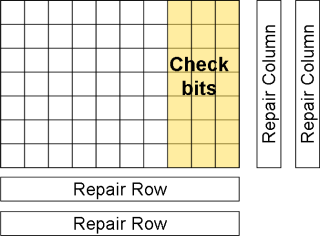

The flip-flops of an integrated circuit are, like its combinatorial elements, subject to the growing variations and the defect and failure mechanisms of nano-electronic circuits, which affect yield during manufacturing as well as reliability during operation. Most significant for flip-flops, however, is their susceptibility to environmental influences such as particle radiation (e.g. protons). They will therefore require protection mechanisms that improve reliability, mask faults and maintain a feasible yield. For memory arrays with high regularity, methods that tackle these problems already exist (Figure 1). Techniques currently used in industry include repair and reconfiguration, error detection and error correction through encoding, periodic refreshing of the data ("scrubbing") to protect against fault accumulation, and built-in self-test with redundancy analysis and self-repair.

It will be necessary to adapt these methods to memory structures in free logic, because the growing use of power reduction techniques such as clock gating reduces the number of concurrently switching elements and especially of concurrently active flip-flops. Consequently, a large number of flip-flops have to hold their value over a longer time frame, which means that these memory elements are subject to the same long-term influences and fault accumulation effects that are already significant for dynamic memory arrays. Therefore, periodic refreshing must be introduced, as is already the case for memory arrays [Hell02].

The susceptibility to transient errors is significantly higher for memory elements than for combinatorial elements [Dodd03]. Because of the ongoing reduction in logic depth, however, the masking of combinatorial faults is expected to diminish, and the soft error rate (SER) of combinatorial elements is expected to grow by orders of magnitude and approach the SER of unprotected memory elements [Shiv02]. These effects also result in erroneous states, which have to be detected by appropriate fault tolerance and redundancy mechanisms. These techniques are complemented by hardening both combinatorial elements and latches against transient faults.

At the same time, the continuous growth in the number of memory elements and the overhead required to improve reliability make manufacturing test, which is a dominant cost factor even today, more difficult. For free logic, scan-path based test is the most widespread technique. Here, the test data is shifted serially into the circuit and the responses are shifted out. To reduce test time, multiple scan paths are used in parallel, and the test patterns are either generated directly on the chip by a built-in self-test or provided as a compressed data stream that is decoded by on-chip circuitry. Similarly, the test response is compressed before it is sent to the tester. Figure 2 shows the basic principle of this embedded test technique.
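The serial shifting and response compression described above can be illustrated with a small software model. This is only a sketch under stated assumptions, not the project's hardware: the function names `scan_shift` and `signature`, the register width of 8 bits and the feedback taps are hypothetical choices made for illustration. The signature register is a generic single-input LFSR of the kind commonly used for response compaction.

```python
from typing import Iterable, List, Sequence


def scan_shift(chain: List[int], serial_in: Iterable[int]) -> List[int]:
    """Shift bits into a scan chain, one per clock; return the bits shifted out.

    chain[0] is the flip-flop next to scan-in, chain[-1] drives scan-out.
    The list is modified in place.
    """
    shifted_out = []
    for bit in serial_in:
        shifted_out.append(chain[-1])  # last flip-flop is observed at scan-out
        chain[1:] = chain[:-1]         # every flip-flop takes its predecessor's value
        chain[0] = bit                 # scan-in feeds the first flip-flop
    return shifted_out


def signature(bits: Iterable[int], width: int = 8,
              taps: Sequence[int] = (7, 5, 4, 3)) -> int:
    """Compact a serial response stream into a `width`-bit signature.

    Assumption: the taps include the most significant bit (width - 1), so the
    state update is invertible and a single flipped response bit can never be
    masked by the bits that follow it.
    """
    state = 0
    mask = (1 << width) - 1
    for bit in bits:
        feedback = bit
        for tap in taps:
            feedback ^= (state >> tap) & 1
        state = ((state << 1) | feedback) & mask
    return state


if __name__ == "__main__":
    captured = [1, 0, 1, 1, 0, 0, 1, 0, 1, 1, 1, 0]   # hypothetical circuit response
    response = scan_shift(list(captured), [0] * len(captured))
    faulty = list(response)
    faulty[3] ^= 1                                     # one erroneous response bit
    print(signature(response) != signature(faulty))
```

Only the short signature has to leave the chip, instead of the complete response stream. Errors in several bits can cancel each other in such a register (aliasing), which is one reason why practical compactors are more elaborate than this sketch.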

These compression methods are meant to counter a long-standing problem of manufacturing test: the external bandwidth between a chip and the test equipment is growing much more slowly than the volume of internal data required to achieve complete fault coverage [Mitr05, Rajs05]. The growing percentage of flip-flops in free random logic and the significant redundancy employed to increase reliability aggravate this problem and would, if not accounted for, lead to economically infeasible test lengths and test times.

The goal of this project is the development of a unified design methodology for memory elements in random logic that combines solutions for reliability, fault tolerance, and online and offline test. To achieve this, each scan path (as in Figure 3) is partitioned into segments of a certain length, and each segment is extended by redundancy that allows permanent faults to be tolerated or repaired while the segment remains tolerant of transient faults.

A scan path can be seen as a one-dimensional one-bit memory, which lends itself to the respective memory test techniques. For regular memory arrays, periodic test, online test and transparent test have been rigorously analyzed. Some of these test methods can be adapted to scan paths. However, repeated read-out and write-back would significantly reduce the availability of the flip-flops for regular system operation and is therefore not feasible. It is therefore promising to adopt the transparent, periodic self-test technique already implemented for memory arrays. A simple logic calculates a residual characteristic (Figure 3), which allows the contents of the scan path to be kept consistent and enables periodic consistency checking.
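The idea of a per-segment characteristic with periodic consistency checking can be sketched as follows. The concrete characteristic is an assumption made for illustration and is not necessarily the one used in the project: here it is the XOR of the 1-based positions of all flip-flops that hold a 1, so a single flipped bit changes the characteristic by exactly its own position. The names `split_into_segments`, `characteristic` and `check_and_correct` are hypothetical.

```python
from typing import List


def split_into_segments(chain: List[int], segment_length: int) -> List[List[int]]:
    """Partition the scan path contents into segments of a fixed length."""
    return [chain[i:i + segment_length]
            for i in range(0, len(chain), segment_length)]


def characteristic(segment: List[int]) -> int:
    """XOR of the 1-based positions of all flip-flops holding a 1 (assumed code)."""
    value = 0
    for position, bit in enumerate(segment, start=1):
        if bit:
            value ^= position
    return value


def check_and_correct(segments: List[List[int]], stored: List[int]) -> List[int]:
    """Recompute every characteristic and compare it with the stored reference.

    Returns the indices of inconsistent segments. Assuming at most one flipped
    bit per segment, the difference (syndrome) equals the position of that bit,
    which is then flipped back in place.
    """
    inconsistent = []
    for index, (segment, reference) in enumerate(zip(segments, stored)):
        syndrome = characteristic(segment) ^ reference
        if syndrome:
            inconsistent.append(index)
            if syndrome <= len(segment):
                segment[syndrome - 1] ^= 1  # undo the assumed single bit flip
    return inconsistent


if __name__ == "__main__":
    contents = [1, 0, 1, 1, 0, 0, 1, 0, 0, 1, 1, 0, 1, 0, 0, 1]
    segments = split_into_segments(contents, 8)
    stored = [characteristic(s) for s in segments]  # computed when the state is written
    segments[1][4] ^= 1                             # transient fault while the clock is gated
    print(check_and_correct(segments, stored))      # index of the affected segment
```

In this model the check reads the list directly. In hardware, the segment would presumably be circulated through its own scan connection so that the contents are preserved, which is what makes the test transparent. If more than one bit of a segment flips between two checks, this simple characteristic can detect the inconsistency but may correct the wrong bit.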

The additional hardware integrated for this online test scheme will also be used for test response compression. Only the calculated characteristic has to be evaluated, from which an incorrect circuit response can be inferred. A complete scan-out of the (redundant) circuit response is not required, so test time is reduced significantly without any additional hardware overhead. For the test patterns (stimuli), the currently known test data compression techniques can still be used.

Bibliography:

| [Dodd03] | P. E. Dodd and L. W. Massengill, "Basic mechanisms and modeling of single-event upset in digital micro-electronics", IEEE Transactions on Nuclear Science, 50 (3), pp. 583-602, June 2003 |

| [Hell02] | S. Hellebrand, H.-J. Wunderlich, A. A. Ivaniuk, Y. V. Klimets, and V. N. Yarmolik, "Efficient online and offline testing of embedded DRAMs", IEEE Transactions on Computers, 51 (7), pp. 801-809, 2002 |

| [SIA] | Semiconductor Industry Association, "International technology roadmap for semiconductors", Technical Report, 2003, available at: http://public.itrs.net/public.itrs.net |

| [Kupp04] | R. Kuppuswamy, P. DesRosier, D. Feltham, R. Sheikh, and P. Thadikaran, "Full hold-scan systems in microprocessors: Cost/benefit analysis", Intel Technology Journal, 8 (1), pp. 63-72, Feb. 2004 |

| [Mitr05] | S. Mitra, S. S. Lumetta, M. Mitzenmacher, and N. Patil, "X-Tolerant Test Response Compaction", IEEE Design & Test of Computers, 22 (6), pp. 566-574, 2005 |

| [Rajs05] | J. Rajski, J. Tyszer, C. Wang, and S. M. Reddy, "Finite memory test response compactors for embedded test applications", IEEE Trans. on CAD of Integrated Circuits and Systems, 24 (4), pp. 622-634, 2005 |

| [Nico96] | M. Nicolaidis, "Theory of Transparent BIST for RAMs", IEEE Trans. on Computers, 45 (10), pp. 1141-1156, 1996 |

| [Koma04] | Y. Komatsu, Y. Arima, T. Fujimoto, T. Yamashita, and K. Ishibashi, "A soft-error hardened latch scheme for soc in a 90nm technology and beyond", Proceedings IEEE Custom Integrated Circuits Conference (CICC'04), pp. 329-332, Orlando, FL, USA, Sep 2004 |

| [Shiv02] | P. Shivakumar, M. Kistler, S. W. Keckler, D. Burger, and L. Alvisi, "Modeling the effect of technology trends on the soft error rate of combinational logic", Proceedings International Conference on Dependable Systems and Networks (DSN'02), Bethesda, MD, USA, pp. 389-398, June 2002 |

Journals and Conference Proceedings

2014

- SAT-Based ATPG beyond Stuck-at Fault Testing. Sybille Hellebrand and Hans-Joachim Wunderlich. it - Information Technology 56, 4 (July 2014), pp. 165--172. DOI: https://doi.org/10.1515/itit-2013-1043

2013

- Efficient Variation-Aware Statistical Dynamic Timing Analysis for Delay Test Applications. Marcus Wagner and Hans-Joachim Wunderlich. In Proceedings of the Conference on Design, Automation and Test in Europe (DATE’13), Grenoble, France, 2013, pp. 276--281. DOI: https://doi.org/10.7873/DATE.2013.069

2012

- Variation-Aware Fault Grading. A. Czutro; Michael E. Imhof; J. Jiang; Abdullah Mumtaz; M. Sauer; Bernd Becker; Ilia Polian and Hans-Joachim Wunderlich. In Proceedings of the 21st IEEE Asian Test Symposium (ATS’12), Niigata, Japan, 2012, pp. 344--349. DOI: https://doi.org/10.1109/ATS.2012.14

- Built-in Self-Diagnosis Exploiting Strong Diagnostic Windows in Mixed-Mode Test. Alejandro Cook; Sybille Hellebrand and Hans-Joachim Wunderlich. In Proceedings of the 17th IEEE European Test Symposium (ETS’12), Annecy, France, 2012, pp. 146--151. DOI: https://doi.org/10.1109/ETS.2012.6233025

- A Pseudo-Dynamic Comparator for Error Detection in Fault Tolerant Architectures. Duc Anh Tran; Arnaud Virazel; Alberto Bosio; Luigi Dilillo; Patrick Girard; Aida Todri; Michael E. Imhof and Hans-Joachim Wunderlich. In Proceedings of the 30th IEEE VLSI Test Symposium (VTS’12), Hyatt Maui, Hawaii, USA, 2012, pp. 50--55. DOI: https://doi.org/10.1109/VTS.2012.6231079

- Built-in Self-Diagnosis Targeting Arbitrary Defects with Partial Pseudo-Exhaustive Test. Alejandro Cook; Sybille Hellebrand; Michael E. Imhof; Abdullah Mumtaz and Hans-Joachim Wunderlich. In Proceedings of the 13th IEEE Latin-American Test Workshop (LATW’12), Quito, Ecuador, 2012, pp. 1--4. DOI: https://doi.org/10.1109/LATW.2012.6261229

- Exact Stuck-at Fault Classification in Presence of Unknowns. Stefan Hillebrecht; Michael A. Kochte; Hans-Joachim Wunderlich and Bernd Becker. In Proceedings of the 17th IEEE European Test Symposium (ETS’12), Annecy, France, 2012, pp. 98--103. DOI: https://doi.org/10.1109/ETS.2012.6233017

2011

- Robuster Selbsttest mit Diagnose. Alejandro Cook; Sybille Hellebrand; Thomas Indlekofer and Hans-Joachim Wunderlich. In 5. GMM/GI/ITG-Fachtagung Zuverlässigkeit und Entwurf (ZuE’11), Hamburg-Harburg, Germany, 2011, pp. 48--53.

- Embedded Test for Highly Accurate Defect Localization. Abdullah Mumtaz; Michael E. Imhof; Stefan Holst and Hans-Joachim Wunderlich. In Proceedings of the 20th IEEE Asian Test Symposium (ATS’11), New Delhi, India, 2011, pp. 213--218. DOI: https://doi.org/10.1109/ATS.2011.60

- Diagnostic Test of Robust Circuits. Alejandro Cook; Sybille Hellebrand; Thomas Indlekofer and Hans-Joachim Wunderlich. In Proceedings of the 20th IEEE Asian Test Symposium (ATS’11), New Delhi, India, 2011, pp. 285--290. DOI: https://doi.org/10.1109/ATS.2011.55

- Korrektur transienter Fehler in eingebetteten Speicherelementen. Michael E. Imhof and Hans-Joachim Wunderlich. In 5. GMM/GI/ITG-Fachtagung Zuverlässigkeit und Entwurf (ZuE’11), Hamburg-Harburg, Germany, 2011, pp. 76--83.

- Eingebetteter Test zur hochgenauen Defekt-Lokalisierung. Abdullah Mumtaz; Michael E. Imhof; Stefan Holst and Hans-Joachim Wunderlich. In 5. GMM/GI/ITG-Fachtagung Zuverlässigkeit und Entwurf (ZuE’11), Hamburg-Harburg, Germany, 2011, pp. 43--47.

- Soft Error Correction in Embedded Storage Elements. Michael E. Imhof and Hans-Joachim Wunderlich. In Proceedings of the 17th IEEE International On-Line Testing Symposium (IOLTS’11), Athens, Greece, 2011, pp. 169--174. DOI: https://doi.org/10.1109/IOLTS.2011.5993832

- Variation-Aware Fault Modeling. Fabian Hopsch; Bernd Becker; Sybille Hellebrand; Ilia Polian; Bernd Straube; Wolfgang Vermeiren and Hans-Joachim Wunderlich. SCIENCE CHINA Information Sciences 54, 9 (September 2011), pp. 1813--1826. DOI: https://doi.org/10.1007/s11432-011-4367-8

2008

- Signature Rollback – A Technique for Testing Robust Circuits. Uranmandakh Amgalan; Christian Hachmann; Sybille Hellebrand and Hans-Joachim Wunderlich. In Proceedings of the 26th IEEE VLSI Test Symposium (VTS’08), San Diego, California, USA, 2008, pp. 125--130. DOI: https://doi.org/10.1109/VTS.2008.34

- Erkennung von transienten Fehlern in Schaltungen mit reduzierter Verlustleistung; Detection of transient faults in circuits with reduced power dissipation. Michael E. Imhof; Hans-Joachim Wunderlich and Christian G. Zoellin. In 2. GMM/GI/ITG-Fachtagung Zuverlässigkeit und Entwurf (ZuE’08), Ingolstadt, Germany, 2008, pp. 107--114.

- Integrating Scan Design and Soft Error Correction in Low-Power Applications. Michael E. Imhof; Hans-Joachim Wunderlich and Christian G. Zoellin. In Proceedings of the 14th IEEE International On-Line Testing Symposium (IOLTS’08), Rhodes, Greece, 2008, pp. 59--64. DOI: https://doi.org/10.1109/IOLTS.2008.31

2007

- A Refined Electrical Model for Particle Strikes and its Impact on SEU Prediction. Sybille Hellebrand; Christian G. Zoellin; Hans-Joachim Wunderlich; Stefan Ludwig; Torsten Coym and Bernd Straube. In Proceedings of the 22nd IEEE International Symposium on Defect and Fault Tolerance in VLSI Systems (DFT’07), Rome, Italy, 2007, pp. 50--58. DOI: https://doi.org/10.1109/DFT.2007.43

- Programmable Deterministic Built-in Self-test. Abdul-Wahid Hakmi; Hans-Joachim Wunderlich; Christian G. Zoellin; Andreas Glowatz; Friedrich Hapke; Juergen Schloeffel and Laurent Souef. In Proceedings of the International Test Conference (ITC’07), Santa Clara, California, USA, 2007, pp. 1--9. DOI: https://doi.org/10.1109/TEST.2007.4437611

- Testing and Monitoring Nanoscale Systems - Challenges and Strategies for Advanced Quality Assurance (Invited Paper). Sybille Hellebrand; Christian G. Zoellin; Hans-Joachim Wunderlich; Stefan Ludwig; Torsten Coym and Bernd Straube. In Proceedings of 43rd International Conference on Microelectronics, Devices and Material with the Workshop on Electronic Testing (MIDEM’07), Bled, Slovenia, 2007, pp. 3--10.

- Test und Zuverlässigkeit nanoelektronischer Systeme. Bernd Becker; Ilia Polian; Sybille Hellebrand; Bernd Straube and Hans-Joachim Wunderlich. In 1. GMM/GI/ITG-Fachtagung Zuverlässigkeit und Entwurf (ZuE’07), Munich, Germany, 2007, pp. 139--140.

2006

- DFG-Projekt RealTest - Test und Zuverlässigkeit nanoelektronischer Systeme; DFG-Project – Test and Reliability of Nano-Electronic Systems. Bernd Becker; Ilia Polian; Sybille Hellebrand; Bernd Straube and Hans-Joachim Wunderlich. it - Information Technology 48, 5 (October 2006), pp. 304--311. DOI: https://doi.org/10.1524/itit.2006.48.5.304

Workshop Contributions

2008

- Ein verfeinertes elektrisches Modell für Teilchentreffer und dessen Auswirkung auf die Bewertung der Schaltungsempfindlichkeit. Torsten Coym; Sybille Hellebrand; Stefan Ludwig; Bernd Straube; Hans-Joachim Wunderlich and Christian Zöllin. In 20th ITG/GI/GMM Workshop “Testmethoden und Zuverlässigkeit von Schaltungen und Systemen” (TuZ’08), Wien, Austria, 2008, pp. 153--157.

- Integrating Scan Design and Soft Error Correction in Low-Power Applications. Michael E. Imhof; Hans-Joachim Wunderlich and Christian Zöllin. In 1st International Workshop on the Impact of Low-Power Design on Test and Reliability (LPonTR’08), Verbania, Italy, 2008.

Hans-Joachim Wunderlich

Prof. Dr. rer. nat. habil.

Research Group Computer Architecture, retired